Adobe's Firefly AI Assistant is a platform bet, not a feature launch

Adobe's Firefly AI Assistant can run six Creative Cloud apps from one prompt. The 100-tool integration is a platform play — and it only works if the reliability holds.

On 15 April 2026, Adobe opened the public beta of something that does not read like a feature update. It reads like a structural bet on the future of its creative platform.

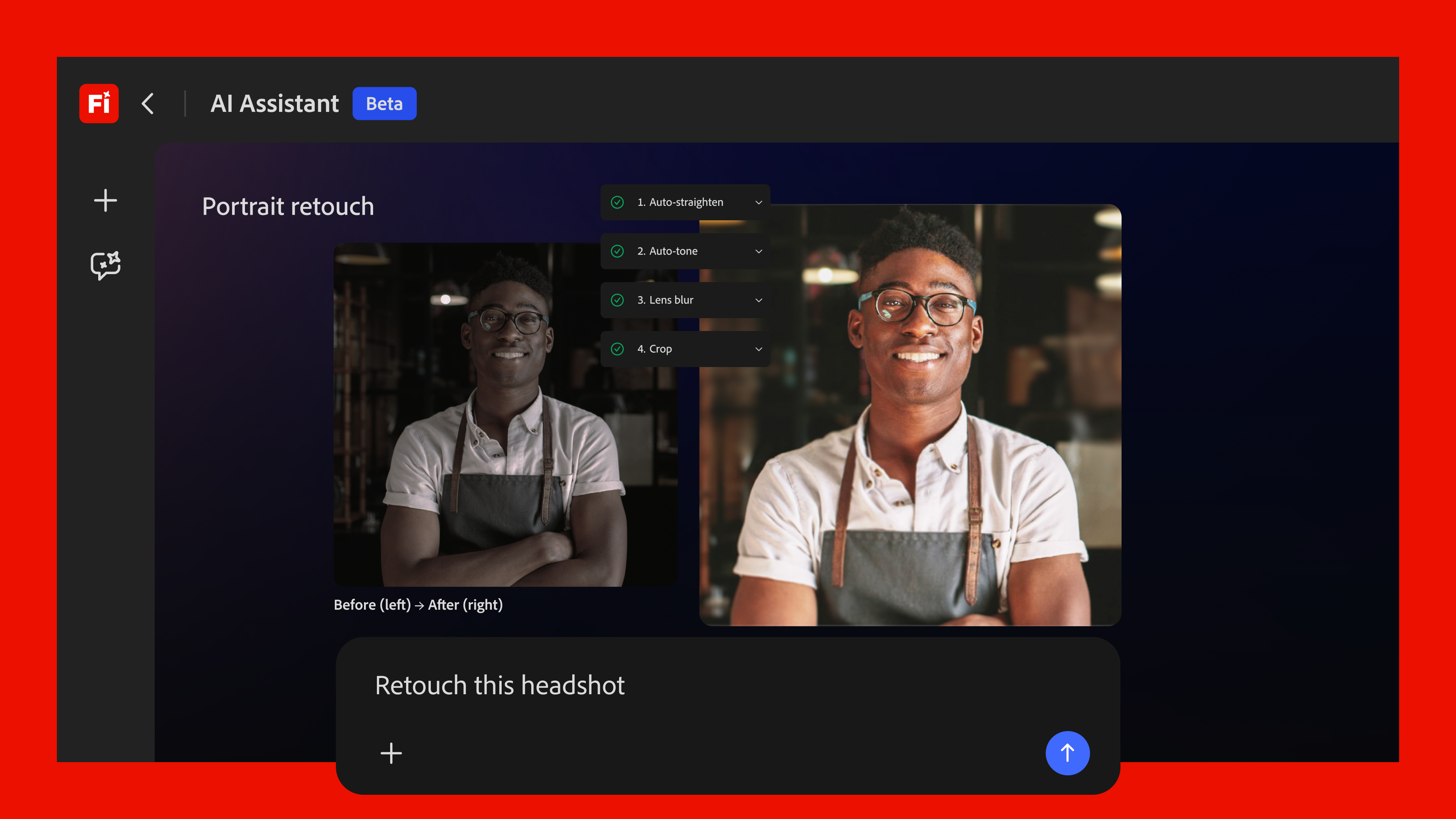

Firefly AI Assistant is a conversational agent that can orchestrate workflows across Photoshop, Premiere Pro, Illustrator, Lightroom, Express, and other Creative Cloud applications from a single natural-language prompt. A designer types “remove the background, apply a cinematic colour grade, export a 16:9 for Instagram” — and the assistant reaches into the relevant tools, across application boundaries, without the user touching a menu. Alexandru Costin, Adobe’s VP of AI and Innovation, told TechCrunch the shift is intentional: “We have the opportunity with the Firefly AI assistant and with agentic experiences to remove some of the friction in learning this large catalog of tools we have and bring all of that value to our customers at their fingertips.”

What separates this from a chatbot bolted onto a single application is the scale of the integration. According to Adobe’s product briefing, the assistant draws on more than 100 tools and skills spanning generative image and video creation, precision editing, layout adaptation, and Frame.io review. More than 60 of those are pro-grade functions, including Auto Tone, Generative Fill, Remove Background, Vectorize, and Presets, that have until now been locked inside their respective application silos. Adobe’s framing of what it is offering is disarmingly simple: “You no longer have to map the process. You can start from the outcome.”

The launch also coincides with a leadership transition at Adobe. CEO Shantanu Narayen, who has led the company since 2007 and oversaw the Creative Cloud subscription pivot that reshaped its economics, is stepping down later in 2026. In that context, the Firefly AI Assistant is the last major platform initiative of the Narayen era, and the first that binds Adobe’s application portfolio together with an agentic layer rather than a licensing model. For his successor, the assistant’s adoption curve over the next 18 months will determine whether the next decade of Creative Cloud is defined by tool depth or by AI-driven lock-in.

But the public beta arrives amid a broader industry debate about agentic reliability.

Lightrun’s 2026 report on AI productivity tools found that 43 percent of AI-generated code changes required manual debugging in production environments. That figure comes from developer tooling, not creative software, but it names the structural problem agentic systems face when they move from demos to production workloads: the gap between what the model understands and what the user needs is wide, and the cost of closing it falls on the human. An LLM that misinterprets a layer mask in Photoshop, deletes a non-destructive edit stack, or applies the wrong colour profile to a client deliverable is not a curiosity. Each of those mistakes is a billable hour that evaporates without a paper trail.

Industry analysts tracking enterprise AI adoption have identified the same tension from the buyer side. A survey of 250 agencies published earlier in 2026 found that agentic AI deployments returned a median 11.4× ROI on SEO audit workflows, the highest-ROI category measured. But SEO audits are low-stakes, batch-oriented tasks where an error is recoverable with a re-run. Multi-app creative workflows sit at the other end of the reliability spectrum: state must persist coherently across Photoshop layers and Premiere timelines, then carry through to Illustrator vector paths without breaking. The survey’s own commentary observes that the ROI curve flattens sharply once agentic systems move into domains where precision is non-negotiable. Creative production belongs in that bucket.

Adobe understands this. The public beta is a controlled release by design, and its core safeguard is sandboxed state management, a layer Adobe has been building since at least 2024, when the company began threading Firefly’s generative models into Photoshop’s non-destructive editing pipeline. Rather than piping user prompts to a general-purpose LLM and hoping for the best, Adobe constrains the assistant to a known universe of Creative Cloud APIs with deterministic state tracking. A user request to “generate a fill layer with the brand palette” resolves to a specific API call with defined inputs and outputs, not an open-ended model completion. Whether this constraint architecture holds under the combinatorial complexity of real-world workflows (say, a designer asking the assistant to composite 40 layers across three applications while respecting a client’s brand guidelines) is precisely the question the beta programme is designed to answer.
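To make the constrained-dispatch idea concrete, here is a minimal Python sketch of the general pattern. It is not Adobe’s implementation, which the company has not published. The tool registry (`REGISTRY`, `ToolSpec`), the tool names and parameters, and the append-only edit log (`DocumentState`, standing in for non-destructive state tracking) are all hypothetical, invented for illustration. Each planned step is checked against a closed set of tools before it touches state, so an unknown tool or a malformed call fails loudly instead of being improvised.

```python
from dataclasses import dataclass, field

# Hypothetical sketch of constrained tool dispatch. Tool names and
# parameters are invented for illustration; they are not Adobe APIs.


@dataclass(frozen=True)
class ToolSpec:
    name: str
    params: frozenset  # exact set of parameter names the tool accepts


@dataclass
class DocumentState:
    # Append-only edit log: each step is recorded rather than applied
    # destructively, so it can be inspected or rolled back later.
    edits: list = field(default_factory=list)

    def apply(self, tool: str, args: dict) -> None:
        self.edits.append((tool, dict(args)))

    def undo(self) -> tuple:
        return self.edits.pop()


# The closed universe of operations the agent is allowed to call.
REGISTRY = {
    spec.name: spec
    for spec in (
        ToolSpec("remove_background", frozenset({"layer"})),
        ToolSpec("generate_fill", frozenset({"layer", "palette"})),
        ToolSpec("export", frozenset({"aspect_ratio", "target"})),
    )
}


def dispatch(state: DocumentState, tool: str, args: dict) -> None:
    """Validate one planned step against the registry, then record it."""
    spec = REGISTRY.get(tool)
    if spec is None:
        raise ValueError(f"unknown tool: {tool}")
    missing = spec.params - set(args)
    extra = set(args) - spec.params
    if missing or extra:
        raise ValueError(
            f"{tool}: missing={sorted(missing)} extra={sorted(extra)}"
        )
    state.apply(tool, args)  # only validated calls ever touch state


if __name__ == "__main__":
    state = DocumentState()
    dispatch(state, "remove_background", {"layer": "photo"})
    dispatch(state, "export", {"aspect_ratio": "16:9", "target": "instagram"})
    print([tool for tool, _ in state.edits])  # ['remove_background', 'export']
    try:
        dispatch(state, "delete_all_layers", {})
    except ValueError as err:
        print(f"rejected: {err}")  # rejected: unknown tool: delete_all_layers
```

The design choice is the point of the sketch: because every step is a validated, logged call rather than free-form model output, a failure shows up as a rejected step with a record, and a successful step can be inspected or undone.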

There is a subtler risk, though, and it has nothing to do with bugs.

Enterprise Creative Cloud teams that embed the Firefly AI Assistant deep in their production pipelines are, by definition, raising their switching costs. Every workflow the assistant automates becomes a workflow that must be rebuilt by hand if the team migrates to a competing platform. Costin’s framing (“bring all of that value to our customers at their fingertips”) describes a feature. But it also describes an architecture of dependency. Value that lives at customers’ fingertips is value that is expensive to walk away from. The economics of the arrangement favour the platform that owns the agent, and the agent, in this case, is woven into the Creative Cloud toolchain at the API level, not layered on top as a chat widget. For Adobe, that is competitive strategy executed at the infrastructure layer. For enterprise teams, it is vendor lock-in by a new name. Which description fits depends on whether the assistant saves more time than it costs in debugging, and on whether the team can afford to lose those automations later.

Competitors have moved quickly. Canva launched its own agentic platform, Canva AI 2.0, within weeks of Adobe’s announcement, and Figma accelerated its AI credits programme alongside a Claude Design integration partnership with Anthropic. For both companies the logic is plain: if Adobe entrenches an agentic workflow moat inside Creative Cloud, the window for Canva and Figma to compete on depth of integration narrows sharply. Each needs an agentic story, and each needs it before the Firefly AI Assistant exits beta and becomes a standard part of the Creative Cloud subscription.

For now, the difference is depth. Canva AI 2.0 operates inside Canva’s single-application surface. Figma’s AI credits buy generative fill and layout suggestions within Figma Design. Neither platform spans six professional-grade applications with a unified agentic layer that understands non-destructive editing semantics, colour management across colour spaces, and timeline-based compositing. Adobe’s bet (and it is a bet) is that the breadth of the toolchain, and the fidelity of the state management underneath it, produce a structural advantage competitors cannot replicate by attaching an LLM chat window to a single application. Whether the market agrees will depend on reliability numbers Adobe has not yet released.

So the Firefly AI Assistant is not really an AI launch. It is a platform play that happens to speak natural language. The assistant is the interface. The moat is the 100-plus tools behind it, and the state-management architecture that ties them together.

And the moat leaks if the assistant gets things wrong often enough that professionals stop trusting it. That is the only number that matters, and nobody outside Adobe has seen it yet.

Asha Iyer

AI editor covering the model wars, AU enterprise adoption, and the policy shaping both. Reports from Sydney.